Using Grants to Transform Student Experience and Outcomes at Two-Year HSIs

Melo-Jean Yap, Ph.D., San Diego State University, San Diego, CA

Community colleges are understudied, yet they are a valuable site for research because their students are still at the beginning of their STEM educational and career trajectories. They provide a “critical access point,” especially for Latinx students and other students of color studying STEM fields [1]. In the University of California, almost 50% of STEM bachelor’s degree graduates were transfer students, and a quarter of all Chicanx doctorate holders were also transfer students [2]. The largely Black, Indigenous, and People of Color (BIPOC) community college student population holds promise, but there is a lack of efforts to enhance equity and diversity in community college Biology Education Research [3]. Fortunately, through a new initiative from the National Science Foundation (NSF), I have received an opportunity (and my first big grant) to contribute to theoretical and practical knowledge that centers the experience of women of color STEM majors in community colleges, in hopes of providing viable support to this underrepresented group along their career pathways.

Supported through the NSF’s EHR Core Research Building Capacity in STEM Education Research, I study the STEM trajectories of this underrepresented group who start their STEM education at two-year public colleges, especially Hispanic-Serving Institutions (HSIs). This grant has enabled me to engage in professional development activities that have taught me how to use technology in performing rigorous STEM education research, including advanced statistical analyses and social network analysis in software such as R and Tableau. Learning to use these technologies advances not just my own skills as a scholar and professional but also the sophistication with which we conceptualize the experience of women of color community college students, especially as producers of novel knowledge, and so has a real impact on broadening the participation of BIPOC in the STEM fields. I highly encourage community college faculty, staff, and administrators, most especially at HSIs, to pursue similar grants that allow one to develop high-tech abilities. Doing so can transform how we study students at HSIs and how we inform policy and practice to improve student success outcomes.

[1] Herrera, F.A., Kovats Sánchez, G., Navarro, M., & Zeldon, M. J. (2018). Latinx students in STEM college pathways: A closer look at diverse pathways through Hispanic Serving Institutions (HSIs). In T. T. Yuen, E. Bonner, & M. G. Arreguín-Anderson (Eds.), (Under)Represented Latin@s in STEM: Increasing Participation Throughout Education and the Workplace. New York: Peter Lang Publishing.

[2] Community College League of California. (2015). Fast Facts 2015. Sacramento, CA: Author.

[3] Schinske, J.N., Balke, V.L., Gita Bangera, M., Bonney, K.M., Brownell, S.E., Carter, R.S., Curran-Everett, D., Dolan, E.L., Elliott, S.L., Fletcher, L., Gonzalez, B., Gorga, J.J., Hewlett, J.A., Kiser, S.L., McFarland, J.L., Misra, A., Nenortas, A., Ngeve, S.M., Pape-Lindstrom, P.A., Seidel, S.B., Tuthill, M.C., Yin, Y., & Corwin, L.A. (2017). Broadening Participation in Biology Education Research: Engaging Community College Students and Faculty. CBE-Life Sciences Education, 16(mr1), 1-11.

Dr. Melo-Jean Yap has navigated the community college system as a student, staff member, Microbiology professor, and STEM Education researcher. A versatile STEM Education scholar, she is the Principal Investigator for “Influential Networks for Women of Color in STEM Community College Pathways,” a grant funded by the National Science Foundation (NSF DUE # 1937777). She is also a Postdoctoral Research Fellow on the NSF-funded grant ADAPT: A Pedagogical Decision-Making Study (NSF HRD # 1759947) at San Diego State. Her interdisciplinary training has shaped her versatility in using concurrent methodologies to advance research on underrepresented groups in the STEM fields, especially at the community college level. A knowledge broker, Dr. Yap hopes to conduct research that may help empower students and professors, as well as inform STEM praxis and policy. She obtained a Ph.D. in Education from UCLA, an M.S. in Biology from California State University, Los Angeles, and a double major (B.S. in Physiology and B.A. in Black Studies, now Africana Studies) from San Francisco State University.


Designing Meaningful STEM Educational Digital Media

Matheus Cezarotto, Ph.D., New Mexico State University, Las Cruces, NM

Effective educational digital media gives learners meaningful learning experiences in a safe and interactive environment. For the Learning Games Lab, research is an essential component of creating meaningful educational media that addresses the needs of both the field and its users.

The Learning Games Lab is a university-based development studio that develops educational games, virtual labs, videos, animations, and other interactive tools to help learners of all ages learn from research-informed interventions. As part of NMSU’s Innovative Media Research and Extension department, the team partners with research groups, faculty, and programs nationally and internationally to create educational media in various disciplines. The lab has partnered with more than 40 universities, agencies, and nonprofit groups on projects funded by NSF, USDA, DOE, the State of New Mexico, and private funders, and brings 30 years of experience producing innovative media on many platforms.

Design-based research guides the Learning Games Lab’s product development. This means that research is conducted throughout the entire development process and guides the team, informing:

- What kind of change we are seeking for the users. The change is related to the project goals, and it can target, for example, users’ knowledge of pre-algebra math, their behavior related to food choices, or their perception of careers in STEM. The team works with content experts to identify the desired change in the learner and what kinds of activities result in that change.

- The design process decisions and compromises. Our process is participatory and collaborative. Through pilots and user testing sessions, the team gets feedback on early versions of the products and tests ideas and concepts. This feedback helps the development team design strategies that better achieve the desired change in the users.

- The impact of the product on the users. Once the product is finished the team investigates the impact of the product, based on the change planned for users in the project. This impact is reported to the client and the academic community.

Some of the lab’s products on STEM education are:

- Math Snacks [1] (https://mathsnacks.org), a series of animations, games, and apps to help middle schoolers better understand math concepts.

- Virtual Labs [2] (https://virtuallabs.nmsu.edu), interactive labs to help students learn basic laboratory techniques and practice methods used by lab technicians and researchers in a variety of careers.

- Outbreak Squad [3] (https://outbreaksquad.com), an engaging game designed for grades 5 and above that is also suitable for youth or adults interested in learning about food safety and foodborne illness prevention.

- Science of Agriculture [4] (https://scienceofagriculture.org), a series of animations, interactives, and videos to teach math and science concepts crucial to the study of agriculture, specifically targeting college students from Hispanic-Serving Institutions.

Every year the Learning Games Lab provides summer sessions for kids and youth from the community. In these week-long sessions, youth have a chance to build and interact with professionals in the technology and design field, to get exposed to careers related to technology, and learn digital skills, storytelling, collaborative work, critical thinking, and game design. In addition to providing outreach opportunities, these labs serve an important purpose: all participants are actively engaged in user testing products in development.

The lab is always willing to collaborate with faculty, universities, and research groups. If you would like to work collaboratively on tools for K-12, college students, or adults, please contact our director, Dr. Barbara Chamberlin ([email protected]). You can find out more about the lab and its products on our website (https://innovativemedia.nmsu.edu).

[1] The Math Snacks project has support from the National Science Foundation (0918794 and 1503507).

[2] The Virtual Labs project has support from USDA CSREES and USDA National Institute of Food and Agriculture under two Higher Education Challenge Grant projects: 2008-38411-19055 and 2011-38411-30625.

[3] The Outbreak Squad project has support from the SPECA Challenge Grant, National Institute of Food and Agriculture, U.S. Department of Agriculture, under award number 2015-38414-24223.

[4] The Science of Agriculture project has support from Agriculture and Food Research Initiative Competitive Grants no. 2010-38422-21211 and 2014-38422-2208 from the USDA National Institute of Food and Agriculture and by the President’s Fund at New Mexico State University.

Dr. Matheus Cezarotto is a post-doctoral researcher in the NMSU Innovative Media Research and Extension Department. His work focuses on building a research-based understanding of instructional and information design, specifically to design meaningful educational media supporting learners’ variability.


Conducting a Culturally-Relevant Evaluation for your Grants

Kavita Mittapalli, Ph.D., MN Associates, VA

Let me begin with a note of caution. I am not an expert in developing social or cultural theories or frameworks. However, as an evaluation practitioner for over 15 years in the United States who has worked in the field with a very wide range of stakeholders within the K-20 sector across the country, I have learned a thing or two about how to ground an evaluation project so that it is inclusive, culturally relevant, and meaningful, and yields useful and actionable results. In this article, I share a few thoughts mostly gleaned from my readings, useful resources on the topic, and some shared experiences from the field.

Defining culture and cultural competence

Culture is a cumulative body of learned, shared, and lived behavior, values, customs, and beliefs common to a particular group or society. In essence, culture makes us who we are as humans [1]. It can be described as the socially transmitted pattern of beliefs, values, and actions shared by groups of people.

With respect to evaluation, it affects everything from how a person with limited English proficiency understands and accesses consent forms or understands survey or interview questions, to the appropriateness of survey or interview questions related to the study itself, to the format and context in which data and results are analyzed and presented.

How can evaluation be culturally responsive?

An evaluation is considered culturally responsive when it takes into account the culture of the program, its context, and its people from the beginning. In other words, the evaluation is based on an examination of local mechanisms and (intended) effects/impacts through lenses in which the culture of the participants is considered an important factor. A culturally responsive evaluation also rejects the notion that program assessments must be only objective and culture free, if they are to be unbiased.

An evaluator who conducts a culturally relevant evaluation honors the cultural context in which an evaluation takes place by bringing the shared lived experiences and common understanding of the people and the places to the evaluation tasks at hand. The evaluation is holistic in nature: never linear and always context-bound.

Culturally-Relevant Evaluation Framework

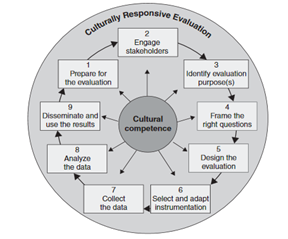

The culturally responsive evaluation (CRE) framework we have used most often in our work so far is the one developed by evaluation experts such as Hood, Hopson, and Kirkhart (2015), Frierson et al. (2010), and Hopson (2009) [2].

According to these scholars, CRE is a holistic lens or framework for centering evaluation in culture, one that recognizes that culturally defined values and beliefs lie at the heart of any evaluation work (see the figure above).

CRE facilitates deeper conversations, engagement, learning, and real-time continuous development and feedback for data collection, analyses, and reporting that allows open dialogue and improvement. While a traditional data collection process requires the evaluator to be positioned as an outsider to assure independence and objectivity, the CRE approach positions the evaluator as a member of the project planning and implementation team who is integrated into the process of understanding, gathering, and interpreting local context and data, framing issues, and surfacing and testing model developments.

As an evaluator, I have found that using the CRE framework while conducting an evaluation, and earlier while developing a proposal or responding to an RFP, has made that work much stronger and more grounded. Another item that my team and I have found very useful and relevant to our practice is a short checklist [3] (below), which we use with some adaptations.

| Culturally-Relevant Evaluation Checklist: Task/Activity/Component |
| --- |
| I include and engage the project’s stakeholders in the evaluation planning process(es). |
| I use a variety of sources to learn about the cultural heritage and context of the project and its people. |
| I seek information to better understand the cultural context of a program and its people. |
| At all stages of an evaluation, I examine and reflect on the potential impact of my culture, biases, and stereotypes around race, ethnicity, gender, socioeconomic status, and others. |
| I pay attention to the similarities and differences of life experiences between the evaluation team and members of the target population, and consider how those dynamics might impact the evaluation. |
| I select or adapt data-collection instruments (i.e., surveys, interview protocols, etc.) to ensure appropriateness for the culture(s) of the people of whom the questions are being asked. I also collaborate with the project team/stakeholders to develop the tools and instruments. |
| Data-collection activities that require interaction with community members, consumers, and stakeholders are led by me or by the team members who are best suited to understand the specific cultural context, based on factors such as shared/lived experiences with the target population, knowledge of the target population, and awareness of biases. |
| In designing data-collection and analysis plans for answering questions about how the program/project/initiative was implemented, I pay attention to: the extent of shared experiences between members of the recipients of the program’s services; the diversity (including demographics and cultural background) of program staff; hierarchical dynamics between and among the staff that have the potential to impact project success and evaluation outcomes (power/privilege relationships); the organization’s historical stance and/or practice related to issues of equity; and community context, dynamics, and makeup. |
| I assess whether local demographics, socioeconomic factors, cultural factors, and other attributes of the community played a role in the process to define program goals and objectives. |
| In analyzing and interpreting outcome data, I disaggregate data along demographics to identify and assess the extent of differential impacts of the program. |
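
The last checklist item, disaggregating outcome data along demographics, can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the records are made up, and the field names `group` and `gain` and the helper `disaggregate` are hypothetical, not part of the checklist or of any evaluation tool. It only shows how an overall average can hide differences between groups, and it reports each group's size so that very small groups are read with caution.

```python
from collections import defaultdict
from statistics import mean


def disaggregate(records, group_key, outcome_key):
    """Return {group: (count, mean outcome)} for each value of group_key."""
    groups = defaultdict(list)
    for row in records:
        groups[row[group_key]].append(row[outcome_key])
    # Keep the group size next to the mean: small groups need careful reading.
    return {g: (len(values), mean(values)) for g, values in groups.items()}


# Hypothetical, made-up records for illustration only (not real program data).
records = [
    {"group": "A", "gain": 10},
    {"group": "A", "gain": 14},
    {"group": "B", "gain": 4},
    {"group": "B", "gain": 6},
    {"group": "B", "gain": 8},
]

# The overall mean treats all participants as one population.
overall = mean([row["gain"] for row in records])

# The disaggregated view shows whether outcomes differ by group.
by_group = disaggregate(records, "group", "gain")

print("overall mean gain:", overall)
for group, (count, avg) in sorted(by_group.items()):
    print(f"group {group}: n={count}, mean gain={avg}")
```

In practice, which demographic categories to use, and how to label them, is itself a decision to make with the project's stakeholders rather than one the analysis code should settle.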

As our society becomes increasingly diverse racially, ethnically, and linguistically, it is pivotal that program planners and designers, implementers, researchers, and evaluators fully understand and appreciate the cultural contexts in which these programs exist and operate, and bring that understanding to their work on a daily basis. To ignore or nullify the reality of culture, and to be agnostic or unresponsive to the needs of the population within their ecosystems, is to do the people a disservice, to put the program in danger of being ineffective, and to leave the evaluation seriously flawed and meaningless.

[1] https://www.nsf.gov/pubs/2002/nsf02057/nsf02057_5.pdf

[2] Hood, S., Hopson, R.K., & Kirkhart, K.E. (2015). Culturally-Responsive Evaluation: Theory, Practice, and Future Implications. Handbook of Practical Program Evaluation. 4th Ed.

Frierson, H. T., Hood, S., Hughes, G. B., and Thomas, V. G. “A Guide to Conducting Culturally Responsive Evaluations.” In J. Frechtling (ed.), The 2010 User-Friendly Handbook for Project Evaluation (pp. 75–96). Arlington, VA: National Science Foundation, 2010.

Source: https://nasaa-arts.org/wp-content/uploads/2017/11/CRE-Reading-1-Culturally-Responsive-Evaluation.pdf

Hopson, R. K. “Reclaiming Knowledge at the Margins: Culturally Responsive Evaluation in the Current Evaluation Moment.” In K. Ryan and J. B. Cousins (eds.), The SAGE International Handbook of Educational Evaluation (pp. 429–446). Thousand Oaks, CA: Sage, 2009.

[3] Developed by Public Policy Associates and cross listed by http://jordaninstituteforfamilies.org/wp-content/uploads/2018/06/Self-Assessment_6-pages.pdf

MNA is a small, woman-owned education research and evaluation firm in Northern Virginia. MNA is headed by Kavita Mittapalli, Ph.D., who brings over 18 years of experience in conducting R & E work for various programs and initiatives across the country. Kavita worked at various consulting firms before founding MNA in 2004. She started her career in Agricultural Sciences before becoming an Applied Sociologist and a mixed methodologist with an interest in research design in the field of education. She brings her multi-disciplinary skills and knowledge to all the work she does at MNA. She is supported by four team members who bring their very diverse backgrounds, academic training, and professional experiences to MNA. To date, MNA has evaluated 31 NSF grants in various tracks in addition to evaluating medium to large grants funded by other agencies (e.g., USDE, DOL, NASA, DODEA, and DOT). Kavita can be reached at [email protected]. Connect with her on LinkedIn (linkedin.com/in/kavitamittapalli) and on Twitter @KavitaMNA.


Camera on, Camera off?

Nicolas Mendez, New Mexico State University, Las Cruces, NM

With the COVID-19 outbreak increasing the number of infections and deaths around the globe, and with delays in the vaccine distribution plan, lockdowns and restrictions are far from over. The situation in the United States is no different from the rest of the world; academic institutions are aware of it and are considering the possibility that many students returning from winter break might be infected but asymptomatic [1]. Given this, most institutions will keep offering classes online and restrict in-person classes to courses that strictly require them, such as labs or health studies.

A new debate has emerged with online teaching: should student cameras be turned on? Students attending several classes might suffer from fatigue and Zoom exhaustion [2]. For example, students at Stanford [3], overwhelmed by the number of online sessions, sent a letter to the Assistant Director for Disability Advising asking for lectures in which students can turn off their cameras. The request came not only from students with disabilities but also from students tired of talking to a screen, students with personal dynamics at home, and students in different time zones who cannot wake up their families.

Some universities, for example the City University of New York (CUNY) [4], have established a policy stating that students may turn off their cameras in live sessions (except when taking an exam or a proctored quiz), unless the teacher requires cameras to be on and the class agrees. On the other hand, Francis Lewis High School [5] has a policy, developed by the Reopening Committee and the School Leadership Team, stating that every student should have their camera on so that attendance can be checked and active class participation fostered. These policies reflect different points of view, considering that every student lives in a different environment and may not want to share the privacy of their home.

At New Mexico State University, although there is no policy on camera usage, many professors state their requirements in the syllabus or during the first sessions of the semester. During Fall 2020, I experienced one class where the professor encouraged us to turn on our cameras and another where the professor preferred that students keep their cameras off, to improve the internet connection and avoid distractions from students’ backgrounds.

The outbreak is still going on and will not end soon. Therefore, the decision about cameras on or off should be communicated and discussed: the professor should share their preference during the sessions and check whether students approve the settings for the class. It is important not to take for granted that the same solution will work for every virtual classroom.

[1] Jacobson, S. (2020, December 20). What will spring 2021 semester look like? Newsday.

Nicolas Mendez is a Master’s student in Industrial Engineering. He is from Bogota, Colombia, and completed his Bachelor’s degree at La Salle University in 2018. During his professional career, he has worked in the pharmaceutical industry performing quality control, process analysis, and cost evaluations. He is a member of the Recruitment Team for the Department of Industrial Engineering at New Mexico State University, working with middle and high school students to pursue a career in engineering.


Dealing with Stress in Stressful Times

Edmundo Medina, New Mexico State University, Las Cruces, NM

As academics, we are reminded throughout our careers that we need discipline, infinite patience, and limitless creativity, and that we must be pillars of integrity. We are told over and over again that we need to have enough charisma to engage students, a doer attitude, and above-average problem-solving skills. According to our administration, even though we are going through a global pandemic and are surrounded by political and social unrest, which has probably affected our own and our families’ wellbeing, we are expected not only to do our work but also to support students who are facing the same stressors. Their advice on how to juggle our own needs and guide students is summed up by “You got this.” Students need our support, and we should be able to give it to them, but when everything seems overwhelming, how can we achieve this?

We all know the importance of prioritizing self-care; we are all too familiar with the positive effects of yoga, exercise, meditation, and taking a walk in nature to help us regain focus. These methods have a positive effect on us, but the stress is greater than ever, so what else can we do to manage? Coping with change is difficult, and living in a world that changed overnight is extremely hard to manage. It is easy to fall into the trap of ignoring our situation or going mindlessly with the flow. Turning a blind eye does not change the fact that anxiety, depression, and insomnia have been increasing since February 2020 [1]. However, there is good news: there are multiple scientific approaches we can take to manage this newfound stress and uncertainty.

- Make phone calls to loved ones: We can start by connecting with our loved ones and listening to their voices [2], since hearing a voice has a more relaxing effect than texting.

- Keep a journal: Writing down what we are feeling helps regulate our emotions [3].

- Develop/keep healthy habits: Another technique is developing our self-control; this trait is found in successful people who keep their healthy habits [4].

- Find the right coping mechanism for you: There are different coping strategies that are useful for dealing with stress. Identifying the one that works for us can increase our resilience; these coping mechanisms range from acceptance and positive reframing to religion [5].

At the end of the day, we should ask, am I loving/caring for myself enough? What kind of impact am I making on the people around me?

[1] Marroquín, Brett, Vera Vine, and Reed Morgan. “Mental health during the COVID-19 pandemic: Effects of stay-at-home policies, social distancing behavior, and social resources.” Psychiatry research 293 (2020): 113419.

[2] Seltzer, Leslie J., et al. “Instant messages vs. speech: hormones and why we still need to hear each other.” Evolution and Human Behavior 33.1 (2012): 42-45.

[3] Lieberman, Matthew D., et al. “Putting feelings into words.” Psychological science 18.5 (2007): 421-428.

[4] Kokkoris, Michail D., and Olga Stavrova. “Staying on track in turbulent times: Trait self-control and goal pursuit during self-quarantine.” Personality and Individual Differences 170 (2020): 110454.

[5] Zacher, Hannes, and Cort W. Rudolph. “Individual differences and changes in subjective wellbeing during the early stages of the COVID-19 pandemic.” American Psychologist (2020).

Edmundo Medina holds a medical degree from the Universidad Autónoma de Ciudad Juarez (UACJ), where he found a passion for understanding different biological mechanisms. After working on the clinical side of medicine and as a professor for Medical Physiology, Human Physiology and Patient Management courses, he decided to expand his research skills, finishing an M.Sc. Degree in Genomics at UACJ. For his Master’s thesis, he directed a project in the characterization of an ion channel in a heterologous expression system where he developed expertise with neural cell culture and whole-cell patch-clamp techniques. Currently, he is a Ph.D. candidate in the Department of Biology at New Mexico State University focused on projects that combine his clinical knowledge with neuroscience connectomics, molecular modeling, and lipid analysis.


Join The HSI STEM Professionals Network!

Update Your Profile Today!

HSI STEM Hub News

Grantsmanship Trainings Now Available

Semillas Mentored Grantsmanship Program Application – Deadline Extended through January 22 – Access application here https://hsistemhub.org/portfolio-item/2021-ganas-mini-grant-program/

Preflight Grantsmanship Certification Series is a training for first-time grant writers as you begin formulating ideas and planning for writing your first grant. Click Here for More Information

New Resources for STEM Pedagogy – Click here for more information

Webinar Recordings

An Overview of the NSF HSI STEM Program Solicitation – Oct 2, 2020, Moderated by: Martha Desmond & Delia Valles, HSI STEM Hub & Nora Garza, TxHSIC

NSF HSI Program Director, Dr. Erika Camacho, and NSF DUE Program Director, Dr. Jennifer Lewis provide a general overview of the updates to the solicitation and provide guidance on the new application for proposals followed by a Q & A session to answer your questions. Click here for more information

Grant Writing Help Sessions On Zoom – Nov 6, 2020

Dr. Antonio Garcia, NSF HSI Program Awardee, will join Dr. Delia Valles as our guest panelist on November 6, 2020 for a Zoom Help Session for preparing your proposal for submission to the new HSI Program Solicitation (NSF 20-599). Click here for more information

STEMversity the Podcast, hosted by President Monica Torres. Guest panelist Dr. Alex Racelis, from the University of Texas Rio Grande Valley, studies ecological interactions in the social, political, and economic contexts in which they occur. In this interview, Dr. Torres and Dr. Racelis discuss institutional capacity building for HSIs and the communities we serve, culturally relevant STEM pedagogy and practices, community-based research, teaching at Minority Serving Institutions, the important role of HSIs in the national and local landscapes, and the origin of the HSI designation. Click here for more information

HSI Community News

Access our community boards to share important opportunities and information with HSI STEM Professionals Network members. We have an HSI News Archive. You can access the information here. Submit your news to [email protected].

AAAS has new trainings available. Click here for more information.

Column Editors

EDMUNDO MEDINA

Edmundo Medina holds a medical degree from the Universidad Autónoma de Ciudad Juarez (UACJ), where he found a passion for understanding different biological mechanisms. After working on the clinical side of medicine and as a professor for Medical Physiology, Human Physiology and Patient Management courses, he decided to expand his research skills, finishing an M.Sc. Degree in Genomics at UACJ. For his Master’s thesis, he directed a project in the characterization of an ion channel in a heterologous expression system where he developed expertise with neural cell culture and whole-cell patch-clamp techniques. Currently, he is a Ph.D. candidate in the Department of Biology at New Mexico State University focused on projects that combine his clinical knowledge with neuroscience connectomics, molecular modeling, and lipid analysis.

NICOLAS MENDEZ

Nicolas Mendez is a Master’s student in Industrial Engineering. He is from Bogota, Colombia, and completed his Bachelor’s degree at La Salle University in 2018. During his professional career, he has worked in the pharmaceutical industry performing quality control, process analysis, and cost evaluations. He is a member of the Recruitment Team for the Department of Industrial Engineering at New Mexico State University, working with middle and high school students to pursue a career in engineering.

MARGIE VELA

Margie Vela is a researcher, educator, and public servant devoted to diversity, equity, and inclusion (DEI) in higher education and STEM. She earned her Ph.D. in Water Science and Management from New Mexico State University (NMSU) in 2019 and served the State of New Mexico in public service as a Regent for the NMSU System from 2017-2019. She served as an intern at the National Science Foundation in 2015 and as a Farmer-to-Farmer USAID volunteer in 2018. Her career in DEI began at Fort Lee Garrison, where she served as Director for the HIRED! Program to prepare dependents of military personnel to enter college or the workforce. Her career in higher education began at a Historically Black University in 2010, where she implemented a multi-million-dollar program focused on diversifying the STEM enterprise. Currently, Dr. Vela serves as Senior Project Manager for the NSF HSI National STEM Resource Hub, working to implement a project that aims to bolster STEM at 539 Hispanic Serving Institutions in grantsmanship, multicultural awareness, institutional capacity building, and STEM pedagogy, and to facilitate partnerships across institutions and disciplines. Dr. Vela serves the NMSU Society for the Advancement of Chicanos/Hispanics and Native Americans in Science (SACNAS) Chapter as founding co-advisor and has served as a national panelist for SACNAS in DEI training as an alumna of the SACNAS Postdoctoral Leadership Institute. She recently earned a Certificate in DEI from Cornell University.